If you sit through a pitch from an enterprise AI vendor, you will be bombarded by a dizzying array of acronyms: NLP, NLU, NLG, LLMs. They are often used interchangeably by salespeople, leading to massive confusion for procurement and IT teams trying to understand exactly what they are buying.

To build a successful Conversational AI strategy, you must understand the underlying architecture. These acronyms are related but not interchangeable: NLP is the broad field, while NLU and NLG are the understanding and generation stages within it. Here is a clear, technical breakdown of the engine running under the hood of modern chatbots.

1. NLP (Natural Language Processing): The Umbrella Term

Definition: Natural Language Processing is the broadest category. It encompasses the entire field of Artificial Intelligence dedicated to allowing computers to process, analyze, and manipulate massive amounts of human language data.

If NLU and NLG are specific tools, NLP is the entire toolbox.

When a program checks your spelling in Microsoft Word, translates a document from French to English, or performs sentiment analysis on thousands of Twitter posts to determine if the public is "angry" or "happy" about a product launch, it is performing NLP.

In the context of a chatbot, NLP is the foundational layer that takes the messy string of characters a human typed (e.g., "im tryna cncel my ordr asap") and cleans it up so the deeper algorithms can attempt to analyze it.
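As a rough illustration of that cleanup step, here is a minimal sketch using only Python's standard library. The `SLANG` and `VOCABULARY` tables are hypothetical and tiny; a production system would rely on much larger dictionaries or a trained model, so treat this as a toy, not a recommended design.

```python
import difflib
import re

# Hypothetical lookup tables, kept tiny for illustration.
SLANG = {"im": "i am", "tryna": "trying to", "asap": "as soon as possible"}
VOCABULARY = ["i", "am", "trying", "to", "cancel", "my", "order",
              "as", "soon", "possible"]


def normalize(text: str) -> str:
    """Lowercase the text, expand slang, and repair near-miss spellings."""
    tokens = re.findall(r"[a-z0-9']+", text.lower())
    cleaned = []
    for token in tokens:
        if token in SLANG:
            # Replace the slang token with its expanded words.
            cleaned.extend(SLANG[token].split())
        elif token in VOCABULARY:
            cleaned.append(token)
        else:
            # Fall back to the closest known word, or keep the token as typed.
            match = difflib.get_close_matches(token, VOCABULARY, n=1, cutoff=0.7)
            cleaned.append(match[0] if match else token)
    return " ".join(cleaned)


print(normalize("im tryna cncel my ordr asap"))
# prints "i am trying to cancel my order as soon as possible"
```

The cleaned string is what the downstream understanding layer would receive instead of the raw user input.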

2. NLU (Natural Language Understanding): Reading Comprehension

Definition: NLU is a highly specific sub-category of NLP. Its singular objective is understanding meaning and intent.

Human language is notoriously ambiguous. If a user types, "I need to freeze my card," a rigid keyword search might look for the word "freeze" and send the user to an article about winterizing their house. An NLU engine understands the semantic context. It knows that when the word "freeze" appears alongside the word "card" in the domain of a banking app, the user's intent is to temporarily suspend a compromised debit card.

NLU performs two critical functions for enterprise bots:

- Intent Recognition: What is the primary goal of the user? (e.g., The goal is "Suspend_Card").

- Entity Extraction: What are the specific parameters attached to the goal? (e.g., Extracting the last four digits of the card or the date of the compromised transaction from the sentence).

Without NLU, you do not have an AI bot. You merely have a search box.
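To make the two functions concrete, here is a minimal sketch of the output an NLU layer produces: an intent label plus extracted entities. It is rule-based purely for readability; real NLU engines typically use trained classifiers. The `INTENT_RULES` table, the `NLUResult` type, and the entity names `card_last4` and `transaction_date` are all hypothetical.

```python
import re
from dataclasses import dataclass, field

# Hypothetical rules: an intent matches when every keyword in a set is present.
INTENT_RULES = {
    "Suspend_Card": [{"freeze", "card"}, {"suspend", "card"}, {"block", "card"}],
    "Check_Balance": [{"balance"}],
}


@dataclass
class NLUResult:
    intent: str
    entities: dict = field(default_factory=dict)


def parse(message: str) -> NLUResult:
    """Return the recognized intent and any extracted entities."""
    words = set(re.findall(r"[a-z]+", message.lower()))

    # Intent recognition: first rule whose keywords all appear in the message.
    intent = "Unknown"
    for name, keyword_sets in INTENT_RULES.items():
        if any(keywords <= words for keywords in keyword_sets):
            intent = name
            break

    # Entity extraction: pull structured parameters out of the free text.
    entities = {}
    last4 = re.search(r"ending in (\d{4})\b", message, re.IGNORECASE)
    if last4:
        entities["card_last4"] = last4.group(1)
    date = re.search(r"\b(\d{4}-\d{2}-\d{2})\b", message)
    if date:
        entities["transaction_date"] = date.group(1)

    return NLUResult(intent=intent, entities=entities)


result = parse("I need to freeze my card ending in 4829, "
               "the bad charge was on 2024-05-01")
print(result.intent)    # prints "Suspend_Card"
print(result.entities)  # prints {'card_last4': '4829', 'transaction_date': '2024-05-01'}
```

Note that "freeze" alone does not trigger the intent here; it only counts in combination with "card", which is a crude stand-in for the contextual understanding described above.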

3. NLG (Natural Language Generation): The Voice of the Machine

Definition: NLG is the inverse of NLU. Once the machine understands what the user wants (NLU), and retrieves the data from the database, it must communicate the answer back to the human. NLG is the process of translating structured computer data into natural-sounding human sentences.

Imagine a user asks, "What is my account balance?" The NLU layer identifies the intent, and the bot's backend queries the SQL database. The result reaches the bot as raw JSON: `{"account_id": 4829, "status": "active", "balance_usd": 1450.50}`.

If the bot just printed that raw JSON to the chat screen, it would be useless to a non-technical customer. The NLG engine takes that structured data and generates a fluent sentence: "Your active account ending in 4829 currently has a balance of $1,450.50."
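The simplest form of NLG is a template filled in from the structured record. The sketch below assumes the JSON payload shown above; the function name `render_balance` is hypothetical, and a real NLG component would handle many more record shapes and phrasings.

```python
import json


def render_balance(raw_json: str) -> str:
    """Turn a raw account record into a customer-facing sentence."""
    record = json.loads(raw_json)
    # Show only the last four digits of the account identifier.
    last4 = str(record["account_id"])[-4:]
    return (
        f"Your {record['status']} account ending in {last4} "
        f"currently has a balance of ${record['balance_usd']:,.2f}."
    )


payload = '{"account_id": 4829, "status": "active", "balance_usd": 1450.50}'
print(render_balance(payload))
# prints "Your active account ending in 4829 currently has a balance of $1,450.50."
```

The `:,.2f` format specifier adds the thousands separator and fixes the amount to two decimal places, which is the kind of presentation detail raw data leaves out.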

How LLMs Changed the Game

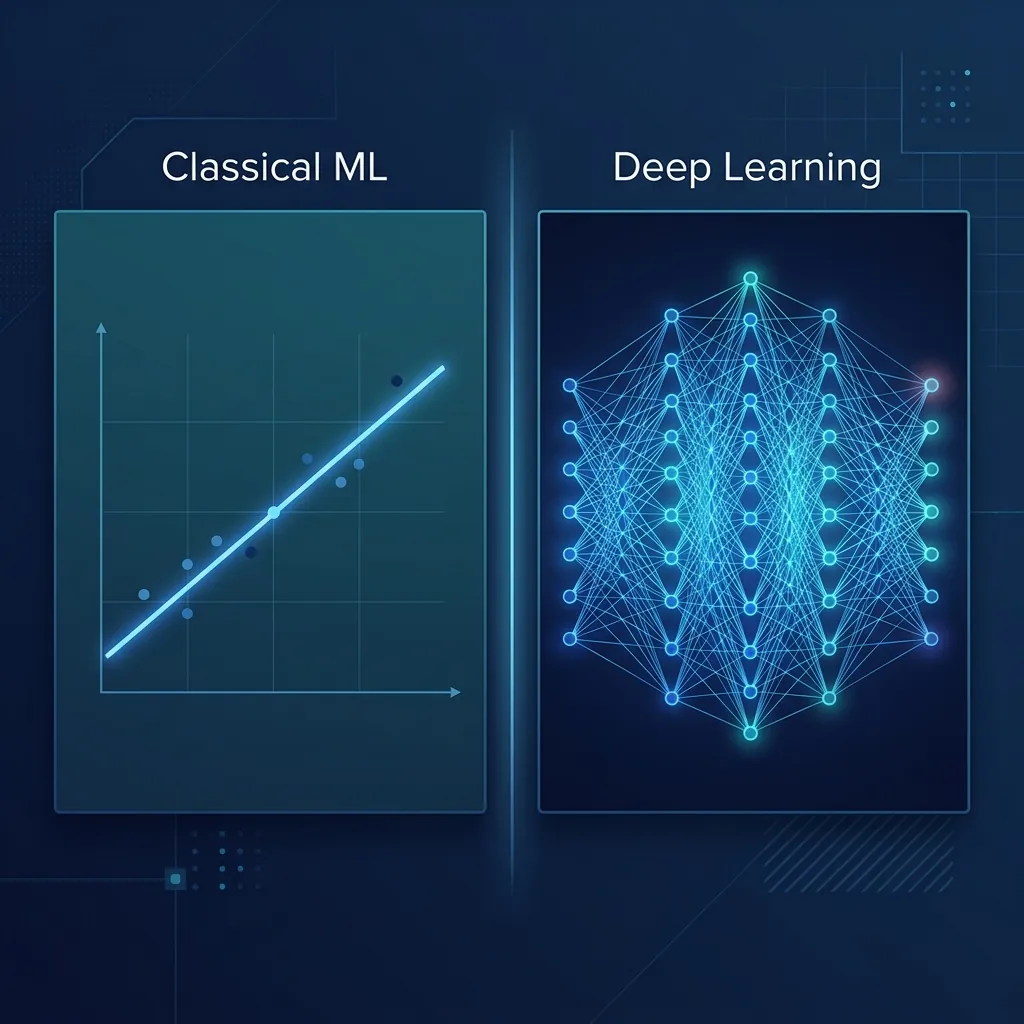

Historically, enterprise developers had to use different, highly specialized models to handle NLU and NLG separately. A team would spend months training an NLU classifier on thousands of specific intents, and then hard-code static template responses for the NLG phase.

The explosion of Large Language Models (LLMs) like OpenAI's GPT and Meta's LLaMA has reshaped this architecture. An LLM is a massive neural network that handles NLP, NLU, and NLG in a single, unified system. Because they are trained on large portions of the public internet, LLMs have out-of-the-box NLU capabilities that often surpass traditional intent classifiers without any task-specific training, and the text they generate is often difficult to distinguish from human writing.
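One way to picture the unified approach: a single prompt asks the model for the intent, the entities, and the reply in one pass. The sketch below is vendor-neutral and makes several assumptions. The `complete` parameter stands for any function that sends a prompt to an LLM and returns its text; `stub_model` is a canned stand-in so the example runs offline; and the prompt wording and JSON keys are illustrative, not any provider's actual API.

```python
import json
from typing import Callable

# Illustrative prompt; the wording and the JSON keys are assumptions.
PROMPT_TEMPLATE = """You are a banking assistant.
Read the customer message and answer with JSON only, using the keys
"intent", "entities", and "reply".

Customer message: {message}
"""

REQUIRED_KEYS = {"intent", "entities", "reply"}


def handle_message(message: str, complete: Callable[[str], str]) -> dict:
    """Run understanding and generation through one model call."""
    prompt = PROMPT_TEMPLATE.format(message=message)
    result = json.loads(complete(prompt))
    # Model output is not guaranteed to follow the format, so validate it.
    missing = REQUIRED_KEYS - set(result)
    if missing:
        raise ValueError(f"model response is missing keys: {sorted(missing)}")
    return result


def stub_model(prompt: str) -> str:
    """Stand-in for a real LLM call; always returns the same canned answer."""
    return json.dumps({
        "intent": "Suspend_Card",
        "entities": {},
        "reply": "I can help with that. Your card has been temporarily frozen.",
    })


response = handle_message("I need to freeze my card", stub_model)
print(response["intent"])  # prints "Suspend_Card"
print(response["reply"])
```

Compared with the classic pipeline, there is no separate intent classifier or response template here, but the validation step matters more because the model's output format is not guaranteed.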

Understanding these layers allows businesses to buy precisely the technology they need. Consult with the machine learning specialists at AdaptNXT to design the optimal NLP architecture for your specific data landscape.