Every enterprise leader who has experimented with a general-purpose AI model like GPT-4 has had the same moment of disappointment: the model knows a great deal about the world but nothing about your company. It cannot accurately explain your specific product features. It writes in a generic voice that does not match your brand. It hallucinates details of your own processes because it has never seen your internal documentation.

This is precisely the problem that LLM fine-tuning solves. In 2026, it is no longer an esoteric research technique. It is a production-ready practice that leading enterprises are deploying to build AI systems that perform measurably better in their specific business context.

What Fine-Tuning Actually Means

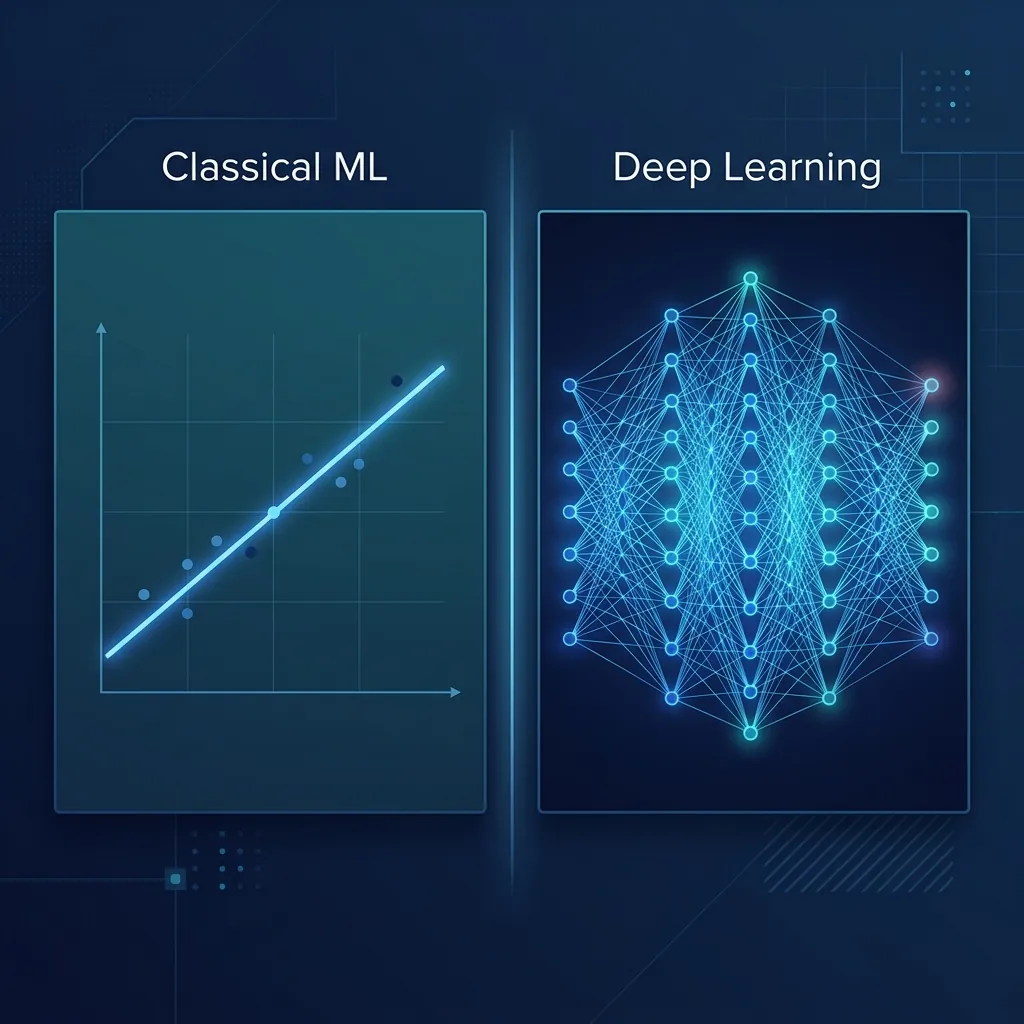

A base LLM (such as Llama 3, Mistral, or GPT-4) is pre-trained on a massive corpus of internet text, which makes it extraordinarily good at general language tasks. Fine-tuning continues training that model on your own dataset, updating its weights so that its behavior shifts toward your domain.

Concretely, this means training the model on thousands of examples of ideal inputs and outputs specific to your business. For a customer support application, you might fine-tune on 50,000 pairs of customer questions and expert answers written by your support team. After training, the model has learned not just how to answer support questions in general, but how your best agents answer your customers' questions about your specific product.
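One common way to store such pairs is JSON Lines (JSONL), with one training example per line. The sketch below assumes a chat-style `messages` schema with `system`, `user`, and `assistant` roles, which many fine-tuning frameworks accept in some form; the exact field names your framework expects may differ. The product name, system prompt, and answers are hypothetical placeholders.

```python
import json

# Hypothetical support pairs: (customer question, expert agent answer).
SUPPORT_PAIRS = [
    ("How do I reset my Acme Router password?",
     "Hold the reset button for 10 seconds, then log in with the default "
     "credentials printed on the label."),
    ("Can I change my billing date?",
     "Yes. Go to Account > Billing and choose a new date; the change applies "
     "from your next cycle."),
]

# Hypothetical system prompt describing the desired voice.
SYSTEM_PROMPT = "You are a support agent for Acme. Answer in the company's voice."


def to_chat_record(question: str, answer: str) -> dict:
    """Wrap one question/answer pair in an assumed chat-style schema."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_PROMPT},
            {"role": "user", "content": question},
            {"role": "assistant", "content": answer},
        ]
    }


def to_jsonl(pairs) -> str:
    """Serialize pairs as JSON Lines: one training example per line."""
    return "\n".join(json.dumps(to_chat_record(q, a)) for q, a in pairs)


if __name__ == "__main__":
    print(to_jsonl(SUPPORT_PAIRS))
```

The assistant turn is the "ideal output" the model learns to imitate, so it should be the answer your best agent would actually give.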

Fine-Tuning vs. RAG: When to Use Which

This is the most common question from enterprise buyers, and the honest answer is that they solve different problems:

- Retrieval-Augmented Generation (RAG) is ideal when you need the AI to access current, frequently changing information—like a live product catalog or an up-to-date knowledge base. RAG connects the model to an external database at inference time. It is faster to set up but does not change the model's underlying behavior or tone.

- Fine-Tuning is ideal when you want to change the model's behavior: its tone, its reasoning style, its domain expertise, its output format. Fine-tuning is "baked in" to the model weights, so it shapes how the model responds in your domain rather than only improving what it can look up.

Many production systems use both: RAG to supply current information, and fine-tuning to ensure the model presents that retrieved information in the right style and format.
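A minimal sketch of the combined pattern, assuming a toy keyword-overlap retriever in place of a real vector database: the retrieval step supplies current facts at inference time, and the prompt it builds would then be sent to the fine-tuned model, which handles tone and format. The catalog entries and the `retrieve` and `build_prompt` functions are hypothetical, and the model call itself is left out.

```python
import re

# Hypothetical, frequently changing catalog: the kind of data RAG serves at inference time.
CATALOG = [
    "Model X200 pump: in stock, price updated this week.",
    "Model X300 pump: discontinued, replaced by X350.",
    "Seal kit SK-12: fits X200 and X350 pumps.",
]


def tokenize(text: str) -> set[str]:
    """Lowercased word set; a crude stand-in for real embeddings."""
    return set(re.findall(r"[a-z0-9-]+", text.lower()))


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents sharing the most words with the query."""
    q = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(q & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, docs: list[str]) -> str:
    """Inject retrieved facts into the prompt; the fine-tuned model supplies style and format."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


if __name__ == "__main__":
    print(build_prompt("Which seal kit fits the X350 pump?", CATALOG))
```

Updating `CATALOG` changes the answers immediately without retraining, while the fine-tuned weights stay fixed; that is the division of labor described above.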

The Four Data Requirements for a Successful Fine-Tune

The quality of your fine-tune is determined largely by the quality of your training data. Before starting any fine-tuning project, make sure your dataset meets these four criteria:

- Volume: For most domain adaptation tasks, plan on at least 1,000 high-quality input-output pairs. For a significant shift in complex behavior (e.g., teaching a model to write legal briefs in your firm's exact style), 10,000–50,000 examples typically produce meaningfully better results.

- Quality over Quantity: One thousand excellent examples will usually beat ten thousand mediocre ones. Noise in training data teaches the model bad habits that are difficult to correct.

- Diversity: Your training examples must cover the breadth of real-world scenarios the model will encounter in production. A customer support bot trained only on billing questions will fail when a user asks a technical troubleshooting question.

- Correct Formatting: Training data must be structured in the precise input-output format your fine-tuning framework expects. This is a technical step but a critically important one.
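Parts of these four criteria can be checked automatically before training. The sketch below assumes each example is a dict with hypothetical `input`, `output`, and `category` fields, and uses exact-duplicate detection as a crude proxy for noise; real quality review still needs human judgment. The default threshold mirrors the rule of thumb above and is not universal.

```python
from collections import Counter

REQUIRED_KEYS = {"input", "output", "category"}  # hypothetical schema


def audit_dataset(examples, min_examples=1000, expected_categories=()):
    """Return a list of human-readable problems found in the dataset."""
    problems = []

    # Correct formatting: every record needs all fields as non-empty strings.
    for i, ex in enumerate(examples):
        missing = REQUIRED_KEYS - set(ex)
        if missing:
            problems.append(f"record {i}: missing {sorted(missing)}")
        elif not all(isinstance(ex[k], str) and ex[k].strip() for k in REQUIRED_KEYS):
            problems.append(f"record {i}: empty or non-string field")

    usable = [ex for ex in examples if REQUIRED_KEYS <= set(ex)]

    # Volume: compare against the rule-of-thumb minimum.
    if len(usable) < min_examples:
        problems.append(f"only {len(usable)} usable examples (< {min_examples})")

    # Quality proxy: exact duplicate inputs often signal a noisy export.
    dupes = [k for k, n in Counter(ex["input"] for ex in usable).items() if n > 1]
    if dupes:
        problems.append(f"{len(dupes)} duplicated input(s)")

    # Diversity: every scenario expected in production should appear.
    seen = {ex["category"] for ex in usable}
    for cat in expected_categories:
        if cat not in seen:
            problems.append(f"no examples for category '{cat}'")

    return problems
```

For example, a support dataset that contains only billing records would be flagged when `expected_categories` also lists a technical-troubleshooting category.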

Modern Fine-Tuning Methods: LoRA and QLoRA

Full fine-tuning, which updates every parameter of a 70-billion-parameter model, is prohibitively expensive for most enterprises. Fortunately, modern techniques have made the process dramatically more efficient:

LoRA (Low-Rank Adaptation) works by freezing the original model weights and adding small, trainable low-rank matrices alongside selected weight matrices in the model. You end up training only a small fraction of the total parameters (often 1% or less), with far lower memory and compute requirements than full fine-tuning, while typically recovering most of its quality improvement.

QLoRA takes this further by quantizing the frozen base model to 4-bit precision and training LoRA adapters on top of it. This makes it possible to fine-tune a large model on a single high-end GPU, something that previously required a multi-GPU cluster.
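To make the LoRA idea concrete: for a frozen weight matrix W of shape d_out × d_in, LoRA trains two small matrices, B (d_out × r) and A (r × d_in), and uses W + (α/r)·BA in place of W. B starts at zero, so the adapted layer initially behaves exactly like the original. The pure-Python sketch below uses toy sizes and no ML framework; the layer dimensions and rank are illustrative assumptions, and production work would normally use a library such as Hugging Face PEFT. It also counts the trainable share of one 4096 × 4096 layer at rank 8 (about 0.39%) and estimates weight memory for 70 billion parameters at 16-bit versus 4-bit precision (weights only).

```python
# Pure-Python LoRA sketch: matrices are lists of rows; toy sizes only.


def transpose(M):
    """Swap rows and columns."""
    return [list(col) for col in zip(*M)]


def matmul(X, Y):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*Y)] for row in X]


def lora_forward(x, W, A, B, alpha, r):
    """Compute x W^T + (alpha / r) * x A^T B^T.

    Shapes: x is batch x d_in, W (frozen) is d_out x d_in,
    A (trainable) is r x d_in, B (trainable) is d_out x r.
    """
    base = matmul(x, transpose(W))                          # frozen path
    update = matmul(matmul(x, transpose(A)), transpose(B))  # low-rank path
    s = alpha / r
    return [[b + s * u for b, u in zip(brow, urow)] for brow, urow in zip(base, update)]


def lora_trainable_fraction(d_in, d_out, r):
    """Share of one layer's parameters that LoRA trains: r*(d_in + d_out) / (d_in * d_out)."""
    return r * (d_in + d_out) / (d_in * d_out)


def weight_memory_gb(n_params, bits):
    """Memory for the weights alone; ignores activations, adapters, and optimizer state."""
    return n_params * bits / 8 / 1e9


if __name__ == "__main__":
    x = [[1.0, 2.0]]               # one input vector, d_in = 2
    W = [[0.5, -1.0], [2.0, 0.0]]  # frozen weights, d_out = 2
    A = [[0.1, 0.3]]               # rank r = 1
    B = [[0.0], [0.0]]             # zero-initialized: no change before training
    print(lora_forward(x, W, A, B, alpha=1.0, r=1))               # [[-1.5, 2.0]]
    print(f"{lora_trainable_fraction(4096, 4096, 8):.2%}")         # 0.39%
    print(weight_memory_gb(70e9, 16), weight_memory_gb(70e9, 4))  # 140.0 35.0
```

Only A and B would receive gradient updates during training; W is never modified, which is why many task-specific adapters can share one base model.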

What Successful Fine-Tuning Looks Like in Practice

A mid-sized Indian manufacturing company fine-tuned a Llama 3 model on five years of its internal engineering support tickets, resolved incident reports, and technical manuals. The result was a private, on-premises AI assistant that could accurately diagnose equipment failures and recommend specific spare parts from the company's inventory catalog, something no general-purpose AI could do. Engineers' resolution time for Tier 1 support tickets dropped by 60%.

This is the tangible business value of fine-tuning: AI that is not generic, but genuinely expert in your world.

If your organization has accumulated years of proprietary data—support tickets, clinical notes, legal briefs, engineering reports, sales conversations—you are sitting on the training data for a competitive advantage that no competitor can simply purchase. Talk to the AI team at AdaptNXT about how to unlock it.