The dominant narrative of the last decade of technology has been "Move Everything to the Cloud." Storage went to the cloud. Compute went to the cloud. And when the Artificial Intelligence boom began, it was naturally assumed that the massive neural networks driving the revolution would live exclusively in hyper-scale cloud data centers, fed by APIs.

However, physics has a way of ruining elegant architectural plans. As AI leaves the realm of text generation and enters the physical world of robotics, autonomous vehicles, and industrial IoT, the latency of sending a signal to an AWS server 500 miles away and waiting for a response is no longer acceptable. The solution is Edge AI: taking the brain out of the cloud and embedding it directly into the physical device.

The Fatal Flaw of the Cloud: Latency

Consider an autonomous forklift navigating a busy warehouse floor. A human steps out from behind a racking aisle directly into the forklift's path. If the forklift relies on Cloud AI, here is the sequence of events:

- The onboard camera captures the image of the human.

- The forklift connects to the warehouse Wi-Fi.

- The image is transmitted to a cloud server in another state.

- The cloud GPU runs a deep learning object-detection model.

- The server recognizes "Human in path = apply brakes."

- The signal travels back over the internet to the local Wi-Fi.

- The forklift receives the signal and stops.

In optimal conditions, this round trip might take 200 milliseconds. But if the warehouse Wi-Fi drops for a fraction of a second, or a router resets, that round trip can stretch to 2 seconds or more. By then, the human has been hit. Cloud AI is incredible for asynchronous tasks like drafting an email; it is a lethal liability for real-time physical operations.

What is Edge AI?

Edge AI solves the latency problem by processing data locally, right at the "edge" of the network where the data is generated. The heavy lifting (the initial training of the AI model on millions of images) is still done in massive cloud data centers. But once the model is trained, it is compressed, optimized, and deployed directly onto a small, ruggedized chip (such as a neural processing unit or an NVIDIA Jetson module) installed physically inside the forklift.
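One common way to "compress" a trained model is quantization: storing weights as 8-bit integers instead of 32-bit floats, which cuts weight storage to roughly a quarter. The sketch below shows the core idea on a single made-up list of weights; the function names and example values are illustrative assumptions, and real toolchains apply this per layer with calibration data.

```python
# Illustrative symmetric int8 quantization of one weight tensor (pure Python).
# Function names and weight values are hypothetical, chosen for this example.

def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float weights from the int8 values."""
    return [q * scale for q in quantized]

weights = [0.82, -1.27, 0.05, 0.40, -0.66]  # made-up example values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

# The rounding error per weight is bounded by half of one quantization step.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q)
print(max_error <= scale / 2 + 1e-12)
```

The trade-off is a small loss of precision in exchange for a model that fits in the limited memory of an embedded chip.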

When the human steps out, the local chip processes the image and applies the brakes in roughly 10 milliseconds, entirely independent of Wi-Fi or internet connectivity.
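To make the difference concrete, here is a rough calculation of how far the forklift travels while it waits for a braking decision. The latencies are the figures quoted in this article; the 2 m/s travel speed is an assumption chosen purely for illustration, and mechanical braking distance is ignored.

```python
# Distance covered during decision latency, before the brakes are even applied.
ASSUMED_SPEED_M_PER_S = 2.0  # hypothetical forklift speed, not from the article

def distance_before_braking(latency_ms, speed_m_per_s=ASSUMED_SPEED_M_PER_S):
    """Return metres travelled while waiting latency_ms for the brake command."""
    return speed_m_per_s * (latency_ms / 1000.0)

scenarios = {
    "cloud, good network": 200,    # ms
    "cloud, Wi-Fi dropout": 2000,  # ms
    "on-device": 10,               # ms
}

for name, latency_ms in scenarios.items():
    print(f"{name}: {distance_before_braking(latency_ms):.2f} m")
# cloud, good network: 0.40 m
# cloud, Wi-Fi dropout: 4.00 m
# on-device: 0.02 m
```

Under that assumed speed, a network hiccup turns a 40 cm delay into a 4 m one, while the on-device path stays at about 2 cm.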

The 4 Pillars Driving the Edge Revolution

1. Immediate Latency Reduction

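Processing locally removes the network from the critical path, which makes split-second reactions achievable and predictable. This is the foundational requirement for surgical robots, self-driving cars, and high-speed manufacturing quality control cameras checking 1,000 components a minute on an assembly line, a pace that leaves about 60 milliseconds per component.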

2. Absolute Data Privacy (Zero Transmission)

Hospitals are deploying audio-monitoring devices in patient rooms, not to record conversations, but to run on-device audio classification that listens for the sound of a patient falling out of bed. Under HIPAA, streaming continuous audio from a patient's room to a cloud server creates a serious privacy and compliance risk. With Edge AI, the device listens, processes the audio locally, and immediately discards it. It transmits only a tiny data packet (the "Fall Detected" alert) to the nurses' station.
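The pattern described above (analyze locally, discard the raw audio, send only the alert) can be sketched as follows. The loudness threshold is a crude stand-in for a trained audio classifier, and the function names, threshold, and payload fields are all hypothetical.

```python
import json
import math

FALL_RMS_THRESHOLD = 0.6  # hypothetical value standing in for a trained model

def looks_like_fall(samples):
    """Placeholder detector: flags loud frames by RMS energy.
    A real device would run a trained audio-event classifier here."""
    rms = math.sqrt(sum(s * s for s in samples) / len(samples))
    return rms > FALL_RMS_THRESHOLD

def process_frame(samples, room_id):
    """Analyze one audio frame on-device and return an alert payload or None.
    The raw samples are never stored or included in the payload."""
    detected = looks_like_fall(samples)
    samples.clear()  # discard the raw audio as soon as it has been analyzed
    if detected:
        return json.dumps({"event": "fall_detected", "room": room_id})
    return None

quiet = [0.01, -0.02, 0.015, -0.01]  # made-up samples
loud = [0.9, -0.8, 0.95, -0.85]      # made-up samples
print(process_frame(quiet, "room-12"))  # None
print(process_frame(loud, "room-12"))   # {"event": "fall_detected", "room": "room-12"}
```

The only data that leaves the device is the short JSON alert; the audio buffer is emptied on every frame whether or not an event was detected.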

3. Massive Bandwidth Savings

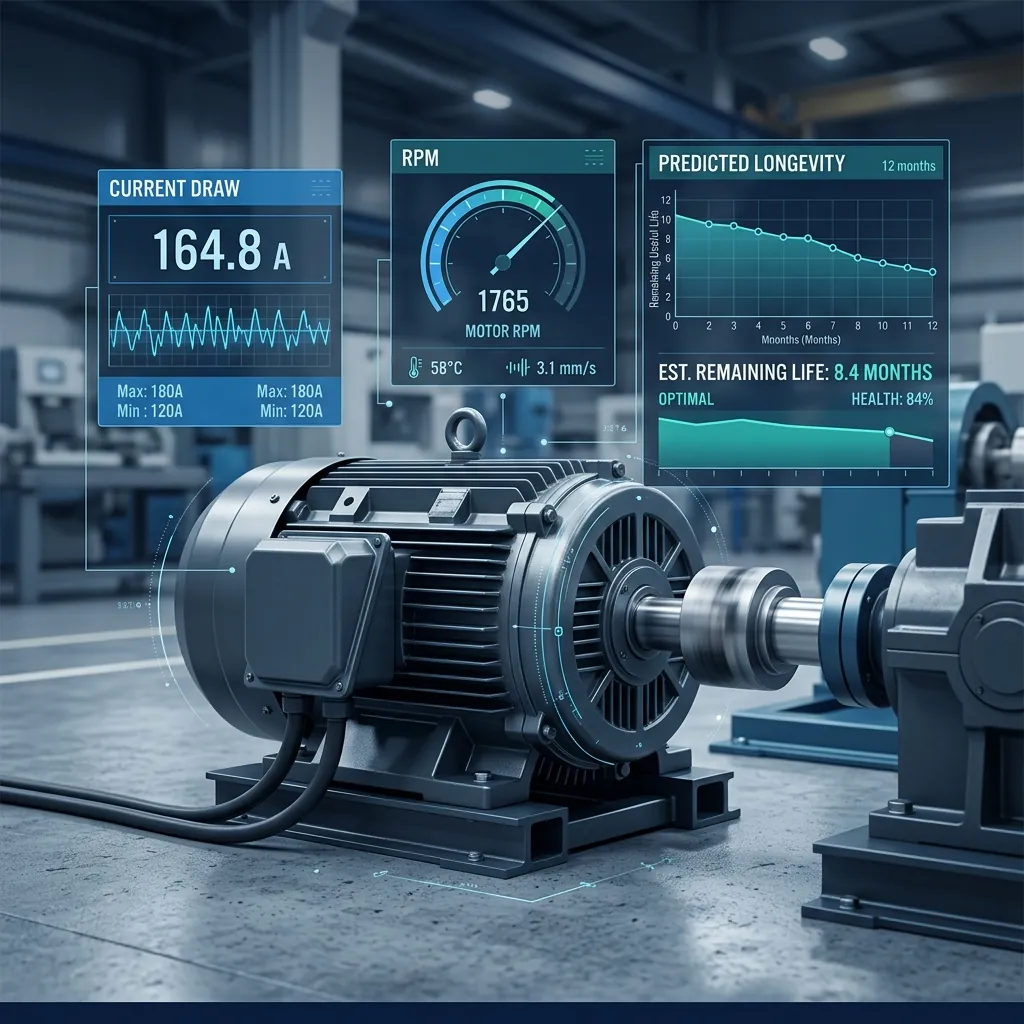

An offshore oil rig might have 4,000 IoT sensors monitoring pressure and temperature 100 times a second. Sending all that raw, "normal" data over an incredibly expensive satellite internet connection just to verify everything is fine is financial insanity. Edge AI analyzes the data locally. It only uses satellite bandwidth to transmit anomalies: "Pump 4 is vibrating outside normal parameters."
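A quick back-of-the-envelope calculation shows the scale of the problem, followed by a minimal filter that forwards only out-of-range readings. The sensor count and sample rate come from the example above; the 8 bytes per reading, the normal band, and the sensor names are assumptions for illustration.

```python
SENSORS = 4_000           # from the example above
SAMPLES_PER_SECOND = 100  # from the example above
BYTES_PER_READING = 8     # assumption: one 64-bit value, no protocol overhead
SECONDS_PER_DAY = 86_400

readings_per_second = SENSORS * SAMPLES_PER_SECOND
raw_bytes_per_day = readings_per_second * BYTES_PER_READING * SECONDS_PER_DAY
print(readings_per_second)  # 400000
print(raw_bytes_per_day)    # 276480000000, i.e. roughly 276 GB per day

def anomalies(readings, low, high):
    """Keep only (sensor_id, value) pairs outside the normal band [low, high]."""
    return [(sensor_id, value) for sensor_id, value in readings
            if not (low <= value <= high)]

# Hypothetical vibration readings; only the out-of-band one is transmitted.
sample = [("pump-1", 3.1), ("pump-4", 9.7), ("pump-2", 2.8)]
to_transmit = anomalies(sample, low=1.0, high=5.0)
print(to_transmit)  # [('pump-4', 9.7)]
```

A fixed band is the simplest possible rule; a production system might learn per-sensor baselines instead, but the bandwidth logic is the same: normal readings stay on the rig.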

4. Resilience and Offline Autonomy

Agricultural drones surveying massive farms for crop disease using computer vision cannot rely on stable 5G connections in rural areas. They must be able to process the images, flag the diseased crops, and navigate autonomously entirely offline, only uploading the final report when they return to base.
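This "work offline, upload at base" behavior is often implemented as a store-and-forward buffer. The sketch below is one minimal way to do it; the class name, the connectivity check, and the upload callback are hypothetical placeholders rather than any specific drone SDK.

```python
from collections import deque

class OfflineReportBuffer:
    """Queue findings locally and upload them only when a link is available."""

    def __init__(self, max_items=10_000):
        # With maxlen set, the oldest findings are dropped if the buffer fills up.
        self._pending = deque(maxlen=max_items)

    def record(self, finding):
        self._pending.append(finding)

    def flush(self, is_connected, upload):
        """Upload queued findings while connected; return how many were sent."""
        sent = 0
        while is_connected() and self._pending:
            upload(self._pending[0])  # upload first, so a failure keeps the item queued
            self._pending.popleft()
            sent += 1
        return sent

uploaded = []  # stands in for a hypothetical upload endpoint
buffer = OfflineReportBuffer()
buffer.record({"field": "north", "finding": "possible leaf blight"})
buffer.record({"field": "east", "finding": "healthy"})

sent_in_flight = buffer.flush(is_connected=lambda: False, upload=uploaded.append)
sent_at_base = buffer.flush(is_connected=lambda: True, upload=uploaded.append)
print(sent_in_flight, sent_at_base)  # 0 2
```

Detection and navigation never wait on the network; connectivity only affects when the results are delivered.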

The Hardware Catch-Up

The reason Edge AI is exploding now, and not five years ago, is hardware. The industry has finally developed highly efficient AI accelerator chips that draw minimal power (important for battery-operated devices) while delivering enough compute to run complex neural networks locally.

If your enterprise operations depend on physical machinery or real-time sensor data, cloud round-trip time is a bottleneck you can no longer afford. Reach out to AdaptNXT's IoT engineering team to design a resilient, low-latency Edge AI architecture.