When your factory floor has 500 sensors streaming data every second, or your fleet of delivery vehicles needs real-time route optimization, where you process that data matters — enormously. The edge vs. cloud debate in IoT isn't just an architectural discussion. It's a decision that directly affects your latency, costs, reliability, and the business outcomes you can achieve.

In this guide, we break down both approaches, highlight their trade-offs, and give you a practical framework to decide what's right for your IoT deployment.

What Is Edge Computing in an IoT Context?

Edge computing means processing data close to where it's generated: on the device itself, on a local gateway, or on a nearby on-premises server. Instead of sending raw sensor data hundreds of miles to a cloud data center, you analyze it locally and transmit only what's truly needed.

Think of it this way: a smart camera on a factory production line doesn't need to upload every frame to the cloud to do its job. It only needs to flag defects. That classification can happen in milliseconds at the edge — no internet required.

- Processing happens locally: on the device, gateway, or edge server

- Latency is minimal: decisions can be made within milliseconds, with no network round trip

- Works offline: operations continue even when connectivity fails

- Reduces bandwidth usage: only aggregated insights go to the cloud
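
To make the "process locally, transmit only what's needed" idea concrete, here is a minimal Python sketch of an edge-side window summary. The names (`WindowSummary`, `summarize_window`) and the anomaly thresholds are illustrative assumptions, not part of any particular IoT platform.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Iterable, List, Optional


@dataclass
class WindowSummary:
    """Compact record an edge node could send upstream instead of raw readings."""
    sensor_id: str
    count: int
    mean: float
    minimum: float
    maximum: float
    anomalies: List[float]  # raw values outside the accepted range


def summarize_window(sensor_id: str,
                     readings: Iterable[float],
                     low: float,
                     high: float) -> Optional[WindowSummary]:
    """Reduce one window of readings to a summary; return None for an empty window."""
    values = list(readings)
    if not values:
        return None
    # Hypothetical rule: anything outside [low, high] counts as an anomaly.
    anomalies = [v for v in values if v < low or v > high]
    return WindowSummary(sensor_id, len(values), mean(values),
                         min(values), max(values), anomalies)


# Four raw readings collapse into one summary that still carries the outlier.
summary = summarize_window("temp-01", [20.0, 21.0, 22.0, 95.0], low=0.0, high=60.0)
print(summary)
```

In a real deployment the window length, the fields kept, and the anomaly rule would depend on the sensor and the use case.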

What Does the Cloud Bring to IoT?

Cloud processing, on the other hand, gives you virtually unlimited compute power, long-term data storage, and the ability to run complex analytics and machine learning models across your entire dataset. Where edge excels at speed, cloud excels at depth.

If you want to correlate performance data from 10,000 machines across 50 factories to train a predictive maintenance model, you need the cloud. No edge device has that breadth of compute or storage.

- Near-unlimited scalability: capacity is provisioned on demand rather than fixed by on-site hardware

- Global aggregation: data from all devices in one place

- Advanced ML & analytics: train models across the full dataset

- Lower upfront hardware costs: no need for on-site servers

The Six Key Factors That Should Drive Your Decision

Most real-world IoT systems don't fit neatly into "edge only" or "cloud only." The right architecture depends on your specific requirements across six dimensions:

1. Latency Requirements

If a decision must be made in under 100 milliseconds (think industrial safety shutoffs, autonomous vehicle responses, or real-time quality control), a round trip to a cloud data center is usually too slow and too variable, so edge processing is effectively mandatory. If your use case can tolerate a few seconds of lag, cloud becomes viable.

2. Bandwidth and Connectivity

Sending raw video, audio, or high-frequency sensor data to the cloud is expensive and often impractical. An edge layer pre-processes the data and transmits only aggregated summaries, which can reduce bandwidth costs by 80–90% in many deployments.
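
The arithmetic behind that kind of saving is simple enough to sketch. The function below compares the upstream data rate of raw readings with the rate of periodic summaries; the message sizes and intervals in the example are made-up numbers for illustration, not measurements.

```python
def bandwidth_reduction(raw_bytes_per_reading: float,
                        readings_per_second: float,
                        summary_bytes: float,
                        summary_interval_s: float) -> float:
    """Fraction of upstream bandwidth saved by sending summaries instead of raw readings."""
    raw_rate = raw_bytes_per_reading * readings_per_second  # bytes/s if everything is uploaded
    summary_rate = summary_bytes / summary_interval_s       # bytes/s if only summaries are uploaded
    return 1.0 - summary_rate / raw_rate


# Hypothetical sensor: 16-byte readings once per second,
# replaced by one 32-byte summary every 10 seconds.
saving = bandwidth_reduction(16, 1, 32, 10)
print(f"{saving:.0%}")
```

With these assumed figures the saving works out to 80%. Higher sampling rates or longer summary intervals push it higher, while sending every anomaly in full pulls it lower.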

3. Data Privacy and Compliance

Healthcare, financial services, and government deployments often have strict rules about where data can travel. Edge computing keeps sensitive data on-premises, which can make compliance simpler and reduces the amount of sensitive data exposed in transit.

4. Processing Power Needs

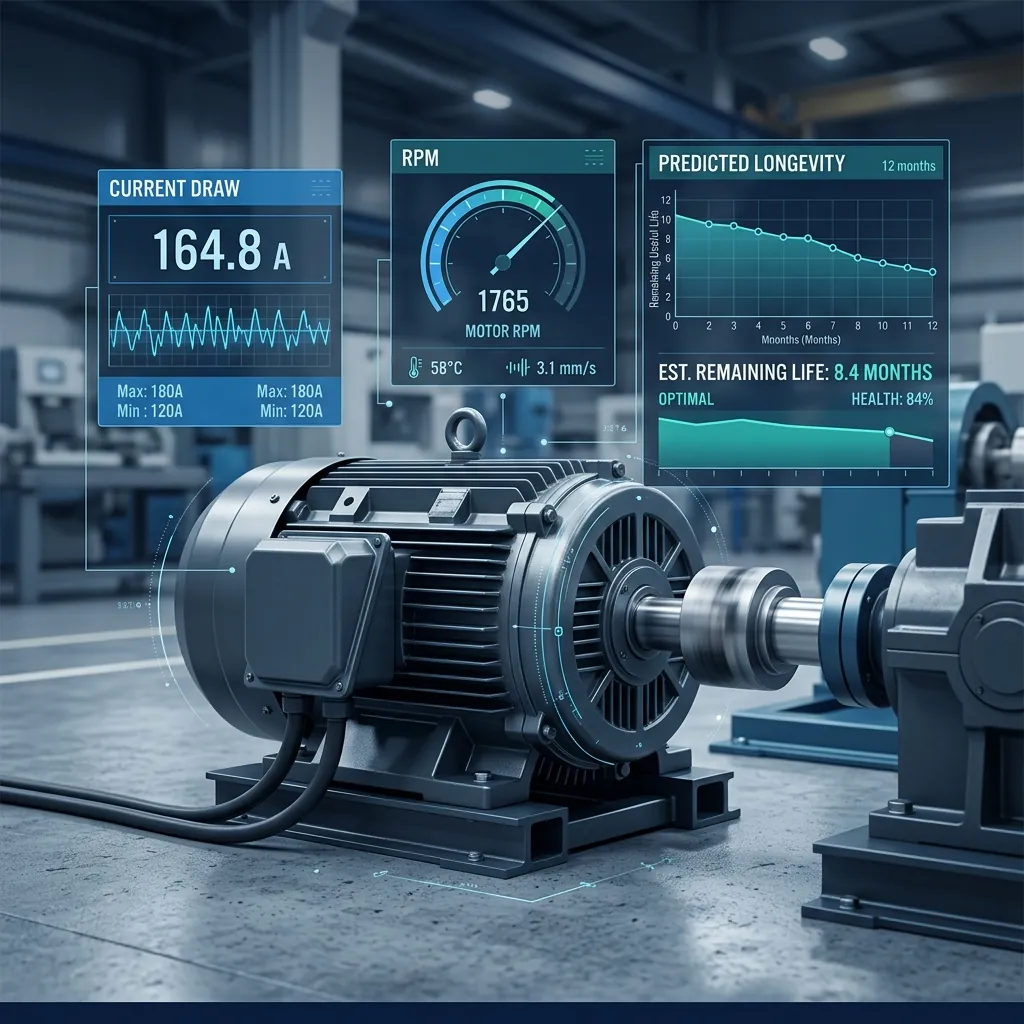

Lightweight inference (classifying sensor readings, detecting anomalies) runs well on modern edge hardware. But training new models, running complex simulations, or analyzing historical trends across millions of records requires cloud-scale compute.

5. Resilience and Uptime

If your internet connection is unreliable — common in remote industrial locations, agricultural fields, or maritime environments — an architecture that depends on the cloud for every decision becomes a liability. Edge-first deployment with cloud sync keeps operations running regardless of connectivity.
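
One common way to get "edge-first with cloud sync" is a store-and-forward buffer: the edge node keeps acting on data locally, queues outbound messages, and drains the queue when the link returns. The sketch below is a minimal in-memory version. `StoreAndForwardBuffer` and the `send` callback are hypothetical names, and a production system would likely persist the queue to disk.

```python
from collections import deque
from typing import Callable, Deque


class StoreAndForwardBuffer:
    """Queue outbound messages locally and forward them when the uplink works."""

    def __init__(self, send: Callable[[dict], bool], max_items: int = 1000) -> None:
        # `send` is an assumed callback that returns True on successful upload.
        self._send = send
        # Bounded queue: when full, the oldest message is dropped automatically.
        self._queue: Deque[dict] = deque(maxlen=max_items)

    def publish(self, message: dict) -> int:
        """Enqueue a message, then try to drain the queue. Returns messages sent."""
        self._queue.append(message)
        return self.flush()

    def flush(self) -> int:
        """Send queued messages in order, stopping at the first failure."""
        sent = 0
        while self._queue:
            if not self._send(self._queue[0]):
                break  # uplink unavailable; keep the message for the next attempt
            self._queue.popleft()
            sent += 1
        return sent

    def __len__(self) -> int:
        return len(self._queue)
```

Dropping the oldest entries when the buffer fills is only one possible policy. Deployments that cannot lose data would need a larger or persistent queue instead.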

6. Total Cost of Ownership

Edge requires upfront hardware investment. Cloud has ongoing per-GB or per-compute costs. For high-volume, always-on IoT deployments, edge often wins on total cost over a 3–5 year horizon. For variable or low-volume workloads, cloud-on-demand is usually more economical.
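
A rough break-even calculation helps frame this trade-off. The sketch below treats edge as an upfront cost plus a small monthly running cost, and cloud as a purely monthly cost. All figures in the example are invented for illustration, and real estimates would also need to cover maintenance, staffing, and hardware refresh.

```python
from typing import Optional


def cumulative_cost(upfront: float, monthly: float, months: int) -> float:
    """Total spend after a given number of months."""
    return upfront + monthly * months


def break_even_month(edge_upfront: float,
                     edge_monthly: float,
                     cloud_monthly: float) -> Optional[float]:
    """Month at which edge becomes cheaper than cloud, or None if it never does."""
    monthly_saving = cloud_monthly - edge_monthly
    if monthly_saving <= 0:
        return None  # cloud is no more expensive per month, so edge never catches up
    return edge_upfront / monthly_saving


# Hypothetical high-volume site: 30,000 in edge hardware and 200/month to run it,
# versus 1,200/month in cloud ingestion and compute charges.
print(break_even_month(30_000, 200, 1_200))
print(cumulative_cost(30_000, 200, 36), cumulative_cost(0, 1_200, 36))
```

Under these assumptions edge pays for itself after 30 months, which sits inside the 3–5 year horizon mentioned above. A low-volume workload with a smaller cloud bill may never reach break-even.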

The Hybrid Architecture: Best of Both Worlds

The most sophisticated IoT deployments don't choose — they layer. A hybrid edge-cloud architecture assigns workloads to where they're best suited:

- Edge layer: Real-time decisions, local alerting, data filtering, privacy-sensitive processing

- Cloud layer: Long-term storage, advanced analytics, model training, cross-site dashboards

- Sync mechanism: Only aggregated, anonymized, or anomalous data moves from edge to cloud

A retail chain using computer vision, for example, might process in-store video feeds at the edge (to avoid uploading sensitive footage) while sending only foot traffic counts and conversion metrics to a central cloud dashboard.

"The question is no longer edge vs. cloud — it's about deciding which intelligence belongs where. The best IoT systems are intelligent at every layer."

How to Choose: A Simple Decision Framework

Use this quick checklist to guide your architecture decisions:

- Does the decision need to happen in under 100 ms? → Must use Edge

- Is there a risk of connectivity loss? → Must use Edge for core logic

- Are you storing or analyzing months of historical data? → Cloud needed

- Are you training ML models? → Cloud for training, Edge for inference

- Are you managing over 1,000 devices? → Cloud management plane required
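
The checklist above can also be expressed as a small function, which is handy when reviewing many workloads at once. This is only a sketch: the `Workload` fields and the recommendation strings are assumptions made for illustration, and the latency question is kept as a yes/no flag because the right cut-off depends on the deployment.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Workload:
    latency_critical: bool      # decision must beat the latency cut-off
    connectivity_at_risk: bool  # the uplink may drop during normal operation
    long_term_history: bool     # months of data stored or analyzed
    trains_ml_models: bool      # models are trained, not just executed
    device_count: int           # devices under management


def recommend(w: Workload) -> List[str]:
    """Map the checklist answers to a list of placement recommendations."""
    recs: List[str] = []
    if w.latency_critical:
        recs.append("edge: real-time decisions")
    if w.connectivity_at_risk:
        recs.append("edge: core logic")
    if w.long_term_history:
        recs.append("cloud: historical storage and analytics")
    if w.trains_ml_models:
        recs.append("cloud: model training")
        recs.append("edge: inference")
    if w.device_count > 1000:
        recs.append("cloud: management plane")
    return recs


# Hypothetical smart-factory workload.
factory = Workload(latency_critical=True, connectivity_at_risk=False,
                   long_term_history=True, trains_ml_models=True,
                   device_count=5000)
for line in recommend(factory):
    print(line)
```

Most non-trivial workloads return a mix of edge and cloud entries, which is the hybrid pattern described earlier.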

Getting the Architecture Right from the Start

Architecture decisions made early in an IoT project are expensive to reverse. A system that was designed to push all data to the cloud will struggle to retrofit edge intelligence without significant rework. Similarly, an edge-only system won't scale gracefully as your analytics needs grow.

At AdaptNXT, we help product teams design IoT architectures that are fit for purpose from day one — with the flexibility to evolve. Whether you're building a smart factory, a connected healthcare device, or an agricultural monitoring platform, we'll help you make the edge vs. cloud decision with confidence.

Want to discuss your IoT architecture? Talk to our team — we offer a free initial consultation.